As neural networks grow in influence and capability, understanding the mechanisms behind their decisions remains a fundamental scientific challenge. This gap between performance and understanding limits our ability to predict how models will behave, ensure their reliability, and detect sophisticated adversarial or deceptive behavior. Many of the deepest scientific mysteries in machine learning may remain out of reach if we cannot look inside the black box.

Mechanistic interpretability addresses this challenge by developing principled methods to analyze and understand a model's internals (its weights and activations) and by using this understanding to gain greater insight into the model's behavior and the computation underlying it.

The field has grown rapidly, with sizable communities in academia, industry, and independent research, as well as dedicated startups and a rich ecosystem of tools and techniques. Following our workshops at ICML 2024 and NeurIPS 2025, this edition at ICML 2026 aims to bring together diverse perspectives from the community to discuss recent advances, build common understanding, and chart future directions.

The Call for Papers is now closed. Thanks to everyone who submitted! Authors will be notified of acceptance by June 12th (AoE).

The first Mechanistic Interpretability Workshop (ICML 2024).

Organizing Committee

Neel Nanda, Google DeepMind

Andrew Lee, Harvard

Andy Arditi, Northeastern University

Stefan Heimersheim, Adecco

Anna Soligo, Imperial College London

J Rosser, University of Oxford

Iván Arcuschin, Poseidon Research

Taero Kim, Yonsei University

Sungjun Lim, Yonsei University

Questions? Email mechinterpworkshop@gmail.com

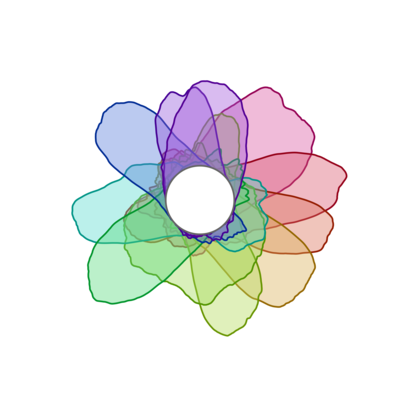

What are those beautiful rainbow flower things?

These are visualizations of "curve detector" neurons from early mechanistic interpretability research. Learn more in the Curve Detectors article on Distill.